By: Noor Falih, Suhono Harso Supangkat, Fetty Fitriyanti Lubis

Robotic Process Automation (RPA) is a form of business process automation and is regarded as a technological innovation in computer science and information technology. Its main objective is to hand tasks performed by humans over to a virtual workforce, or digital workers, that carry out the same tasks with the assistance of software robots. For companies planning to implement RPA, one of the main challenges is understanding where to apply it. Identifying processes for RPA is crucial to ensure that RPA is used appropriately and makes a positive difference to the business. Process identification also screens out complex tasks that are not suitable for automation with RPA.

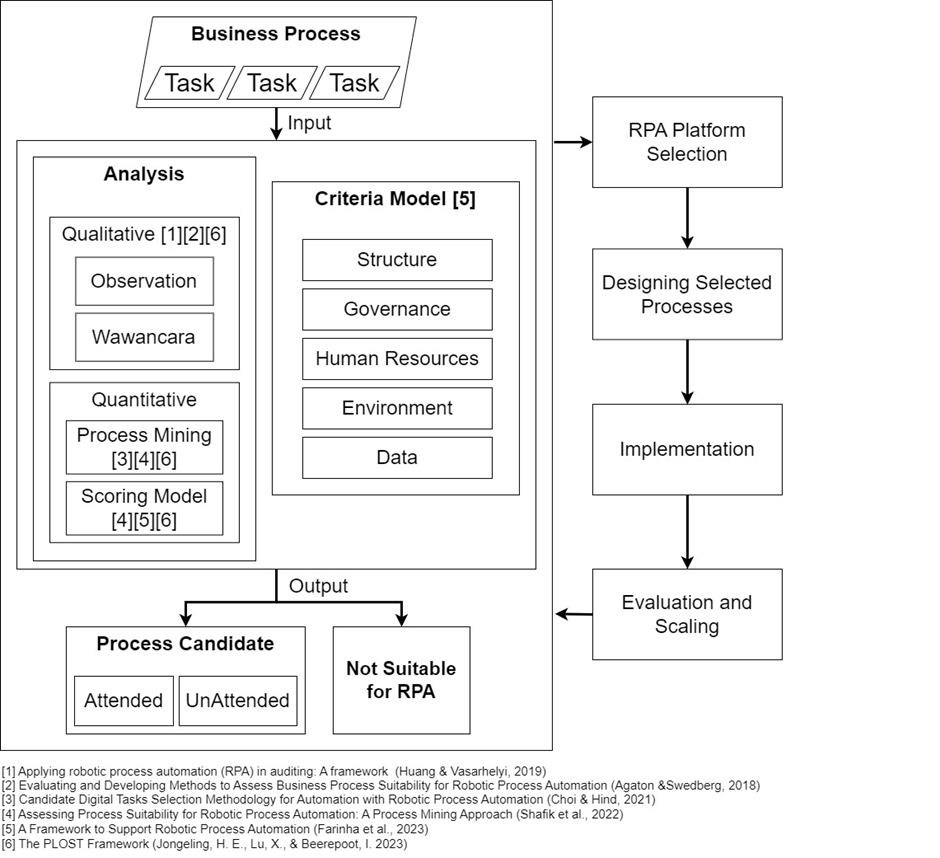

Current frameworks focus only on the process side. Their criteria do not classify processes as attended or unattended, and their analysis is qualitative, for example relying solely on interviews. As a result, the generated process candidates are not objective, since crucial information about the processes may be missed. Existing quantitative analyses based on scoring models, on the other hand, remain subjective and depend heavily on expert judgment. Process mining techniques can be applied to obtain information about process performance. However, process mining has a drawback: it can be time-consuming because it requires collecting vast amounts of process data and activity logs. Performing a qualitative examination before the quantitative analysis saves time, because process mining is then applied only to relevant processes. In addition, specific guidelines are needed for business processes that require process mining but lack activity logs.
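The ordering described above, a qualitative screen first and process mining only on what survives it, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and is not taken from the proposed framework: the two screening criteria (`rule_based`, `digital_input`), the event-log layout `(process, case_id, timestamp_minutes)`, and the function names `qualitative_screen` and `mine_log` are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Candidate:
    name: str
    rule_based: bool     # hypothetical criterion gathered in interviews
    digital_input: bool  # hypothetical criterion gathered in interviews


def qualitative_screen(candidates):
    """Stage 1: keep only candidates that pass every assumed criterion."""
    return [c for c in candidates if c.rule_based and c.digital_input]


def mine_log(events, names):
    """Stage 2: case count and mean case duration per shortlisted process.

    events: iterable of (process, case_id, timestamp_minutes) tuples.
    Events of processes that are not in `names` are skipped entirely.
    """
    spans = {}  # (process, case_id) -> (first timestamp, last timestamp)
    for process, case_id, ts in events:
        if process not in names:
            continue
        lo, hi = spans.get((process, case_id), (ts, ts))
        spans[(process, case_id)] = (min(lo, ts), max(hi, ts))

    durations = {}  # process -> list of case durations
    for (process, _case), (lo, hi) in spans.items():
        durations.setdefault(process, []).append(hi - lo)

    return {
        p: {"cases": len(d), "mean_duration": mean(d)}
        for p, d in durations.items()
    }


# Made-up illustration data, not results from any case study.
candidates = [
    Candidate("invoice_entry", rule_based=True, digital_input=True),
    Candidate("contract_negotiation", rule_based=False, digital_input=True),
]
events = [
    ("invoice_entry", "c1", 0), ("invoice_entry", "c1", 12),
    ("invoice_entry", "c2", 5), ("invoice_entry", "c2", 13),
    ("contract_negotiation", "c9", 0), ("contract_negotiation", "c9", 300),
]

shortlist = qualitative_screen(candidates)
metrics = mine_log(events, {c.name for c in shortlist})
print(metrics)
```

In this sketch the log of the rejected candidate is never aggregated, which is where the time saving would come from. A real study would replace `mine_log` with a proper process mining tool and replace the two boolean flags with the framework's actual criteria.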

This work proposes a framework that integrates quantitative and qualitative methods to identify suitable process and task candidates for RPA. The framework addresses the issue of missing activity logs for business processes that require process mining, and it classifies processes as attended or unattended. It is evaluated by applying it to existing case studies and by involving experts through the Thinking-Aloud Experiment method, to determine whether it operates effectively and efficiently in terms of usability, feasibility, and reusability.